In 2020, Topaz Labs introduced Video Enhance AI, a product for upscaling and enhancing video. This review is based on my personal experience with Topaz Video Enhance AI and the newly released version 2, with additional content for version 3.

Video upscaling isn’t a new technology. High resolution televisions and video players have been doing it for several years. Those products do the upscaling on the fly. Topaz Labs Video Enhance AI instead converts your video file to a higher resolution file, which can then be used on computers and televisions that may not feature upscaling technology. It also cleans up some of the noise sometimes introduced with upscaling. This latter point will come up again several times in my review of Topaz Video AI.

Pet Peeve

Lots of products and organizations are using the term Artificial Intelligence (AI). It’s the current catch phrase and in most cases it is not AI, but instead just tons of programming.

A true artificial intelligence learns as it goes. It analyses, finds patterns, and learns how to recognize and process data. To be clear, data can be any type of information whether language or numbers, visual, audible, etc. Don’t be confused about the stereotypical data used in computer lingo.

Programs like Video AI and similar offerings don’t use true artificial intelligence. Instead they simply have a significant amount of programming code to process the data stream piece by piece in the sequence in which it’s presented. So don’t let the over-use of “AI” influence any product evaluation and usage. It may be an advanced program, but it isn’t actually Artificial Intelligence.

Short Story – Spoiler Alert!

If you want the gist of this review, here it is. Topaz Labs Video Enhance AI upscales and enhances reasonably well, depending on the source. Don’t expect miracles and don’t expect rapid results. The product is expensive for its limitations and shortcomings.

For a more detailed explanation of my review, read on.

System Configuration

Each computer system is going to have different results with this application, depending on its hardware and software configuration. My review of Topaz Labs is based on my system configuration; the basics are as follows:

Dell XPS 8930

Windows 11 Professional (64-bit) [1]

NVIDIA GeForce GTX 1070

Intel UHD Graphics 630 (Built-in)

Intel i7-8700 3.2 GHz

32 GB RAM

500 GB NVMe Flash drive (Operating System and Application Installs)

1024 GB NVMe Flash drive (Scratch disk and temporary storage)

Upscaling Technology

When the source video is of good enough quality, the upscaling ranges from acceptable to pretty good. In the example below from Porky’s [2], the source was a Widescreen DVD rip (720×404) upscaled to HD 1080p.
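
To put that jump in perspective, here is a small sketch of the arithmetic. It assumes the 1080p target is the standard 1920×1080 frame; the function name is just for illustration.

```python
# Source size is from the DVD rip above; 1920x1080 is assumed for "1080p".
SRC_W, SRC_H = 720, 404
DST_W, DST_H = 1920, 1080


def upscale_stats(src_w, src_h, dst_w, dst_h):
    """Return linear scale factors, pixel-count ratio, and the share of
    output pixels that have no direct counterpart in the source."""
    scale_x = dst_w / src_w
    scale_y = dst_h / src_h
    pixel_ratio = (dst_w * dst_h) / (src_w * src_h)
    synthesized = 1 - 1 / pixel_ratio
    return scale_x, scale_y, pixel_ratio, synthesized


sx, sy, ratio, synth = upscale_stats(SRC_W, SRC_H, DST_W, DST_H)
print(f"horizontal x{sx:.2f}, vertical x{sy:.2f}")
print(f"{ratio:.1f} times as many pixels per frame")
print(f"about {synth:.0%} of each output frame has to be estimated")
```

In other words, only about one output pixel in seven corresponds to a pixel that existed on the DVD. The rest is the upscaler's best guess, which is why source quality matters so much.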

Since the source is a relatively clean video from a DVD of the original film, the standard Gaia HQ processing model is fairly reliable for scaling the video up to 1080p.

The Artemis model sharpens well, but tends to introduce artifacts (worming) in soft areas. It is only really good at upscaling a source of very good quality, like the below example from Oz the Great and Powerful [3]. The original was recorded with high definition digital equipment. As a result, even though the source file for the conversion is a DVD rip (720×404), a clean upscale is much more achievable.

Video Enhance AI doesn’t appear smart enough (remember it isn’t true AI) to reference the previous few frames when it upscales a frame. When a key subject has moved in the frame, if the previous frames were referenced, the system could use the prior data to help ensure that there is consistency with how it handles the subject in its new location. Instead, each frame is individually rendered, independent of prior or future frames, resulting in variations and sometimes artifacts of the same material as it moves on screen.
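
As a rough illustration of why referencing earlier frames helps, here is a toy sketch. It is not how Video Enhance AI (or any real upscaler) works internally. The pixel values are made up, and the blending is a plain exponential moving average that ignores motion entirely; a real temporal method would also have to track where the subject moved.

```python
def frame_to_frame_jitter(values):
    """Mean absolute change between consecutive frames for one pixel."""
    steps = [abs(b - a) for a, b in zip(values, values[1:])]
    return sum(steps) / len(steps)


def temporal_blend(values, weight_prev=0.5):
    """Blend each frame's value with the previous blended value
    (a simple exponential moving average)."""
    blended = [values[0]]
    for value in values[1:]:
        blended.append(weight_prev * blended[-1] + (1 - weight_prev) * value)
    return blended


# Hypothetical brightness (0-255) of one pixel on a static surface after
# each frame was enhanced independently of its neighbours.
independent = [120, 132, 118, 135, 121, 130]
blended = temporal_blend(independent)

print("jitter, independent frames:", frame_to_frame_jitter(independent))
print("jitter, blended with prior frame:", frame_to_frame_jitter(blended))
```

The blended sequence changes far less from one frame to the next, which is the kind of consistency I would expect from a tool that looked at prior frames.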

The example to the right, also from Oz the Great and Powerful, demonstrates the artifacts created with the Artemis model. It is evident in most of the soft focus areas, as well as around small details like Oz and Theodora running in the distance.

As you see, it sharpened details, like Oz’s hat and bag, but notice the artifacts around his shoulders and seat. Also the edges of the soft focus vegetation that frame Oz and Theodora have been over-processed. The result is similar to poorly processed dithering.

Video Enhance AI offers low-quality and computer quality (Gaia only) rendering models, but they tend to super enhance aberrations and high contrast areas at extreme levels. I have not found a good use for these other models yet.

More examples of this issue will be evident later in this article, especially when discussing low quality non-commercial video.

Face Rendering

Video Enhance AI needs to include facial recognition and modified algorithms for faces. Close faces (larger on screen) upscale very well, but distant faces (smaller detail) often end up deformed or blurred out.

In the below example of just Oz as he arrives at the land of his namesake, you can see the aberrations that are my main complaint with the Artemis model.

Look at his nose and edges of his face and hair. The damage caused to these areas reminds me of poor de-interlacing, except that the lines for interlacing are horizontal not vertical. In the softer areas of the scene, like on the left and right geographical structures, you can see aberrations that I refer to as digital worming. I used to see this effect with lower quality digital cameras from 20ish years ago.

With the newer models, scaling from 720p to 1080p, the face deformities are less common, but there is still some cartoonish fakeness to teeth and eyes. Scaling 720p to 2160p is worse, as shown in the example to the right.

Ignoring the overall softness in this example, you see the eye to our right actually looks reasonable, but look at what happened to the other eye. It is greatly exaggerated. The mouth rendering has raised (opened) the mouth slightly on only one side, or just exaggerated the lips over there. The top, front of the hairline was refined some, while the rest of his hair is left soft. The deformities to the ears can only be appreciated with moving video, which I’m not going to bother putting in this article.

At least the newer models are an improvement over the deformities created by Artemis, which were not improved in the second release.

Home Video

One of the things that Topaz Labs touts about Video Enhance AI is that it is good for restoring low quality videos, like home movies. With the average home movie, which often comes from tape formats, the improvement is marginal at best, especially for small details.

Noise Reduction

As part of the upscaling, Topaz Labs Video Enhance AI performs noise reduction along with its scaling and sharpening. With the Gaia model this eliminates a lot of the digital artifacts from the scaling process and even helps with noise that was on the source. This results in a cleaner video image that is easier to watch. The Artemis model has a tendency to enhance artifacts that were on the source video, often to ugly and distracting levels.

Unfortunately, the noise reduction can generate a slight haze over some video content, and details of small items are often lost. This is a normal problem for noise reduction, even with still photography, so I can’t fault it too much.

In this 1989 high school presentation of Grease [4], you can see that the video was very low quality. Every single detail is soft and noisy.

When run through the Artemis model, the faces became ugly lines and boxes. The harsh shadows from the stage lights became extremely exaggerated. I should have saved a sample with that model, but my evaluation copy of Topaz Labs Video Enhance AI expired and I don’t have the Artemis sample to show.

As pointed out earlier, the Gaia model reduced the noise on this low quality video, but it also introduced a haze. It also softened both characters’ facial features while starting to exaggerate the harsh shadow on the right side of Sandy’s skirt.

Oddly enough, the Gaia and Artemis models that were designed for low quality sources gave the worst results.

The newer models – Theia, Gaia and Dione – didn’t do any better on lower quality source video content. In some cases, they were worse than the older models. The only exception is the Dione Interlaced TV model, which didn’t over-enhance shadows.

Text Rendering

Video Enhance AI falls way short on text rendering with lower quality sources. Title screens, credits, and especially the small text at the bottom, all get boxy and blobby. Topaz Labs needs to improve their text rendering when upscaling video. This is very similar to the issue with distant faces.

This problem is evident on this sample from the title credits of If You Could See What I Hear [5]. The title has an awkward shadow-like outline with the bottom of each letter repeated. The Copyright notice at the bottom is practically illegible.

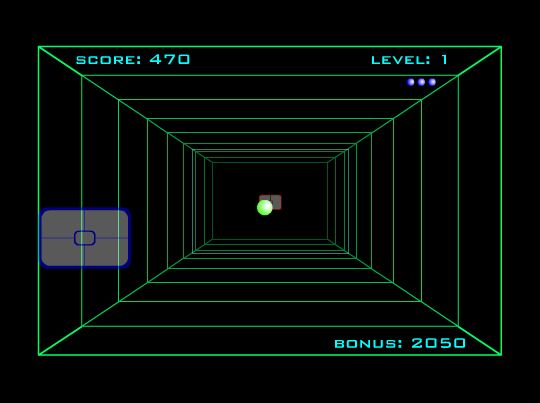

New Proteus Model

A recent addition to Topaz Video AI is the Proteus model. For a high quality source video, it does a fairly good job scaling up to 1080p. In the below example, the left is the source file at 480p and the right is the processed upscale to 1080p. Many of the details are sharper than the source – it actually looks a lot better than the original.

Here is the same frame scaled up to 4K (2160p). To keep the view the same, we are zoomed out to 50%. As you can see, a bit more noise is introduced but the ultimate result is still reasonable.

Here it is at 100% – the introduced noise is more apparent. When watching on a 4K television at a fair distance from the screen, this noise might not be so obvious and may actually look natural.

Processing Speed

One of the important things to note is that the video processing speed is dependent on the amount of upscaling being performed and processing power of the CPU/GPU of the computer. It is best to use the GPU (Graphics Processing Unit) rather than the CPU (Central Processing Unit), because the GPU is designed for graphics rendering and can perform the task substantially quicker. Using the GPU also frees the CPU for performing other unrelated tasks – you know, multi-tasking. For fastest results, it would be great if Topaz Video Enhance AI would use both GPU and CPU at the same time. Currently, we can only use one or the other.

On my system, a feature length film (about 90 minutes) being upscaled from DVD rip to 1080p takes about 38 hours. You read that correctly – a day and a half to upscale one movie. This is something that my television does on the fly, real-time. And my television has substantially less processing power and memory than my computer.
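
For a sense of scale, the figures above work out as follows. The 24 frames per second is my assumption for a typical film, not something I measured, so treat the per-frame numbers as approximate.

```python
FILM_MINUTES = 90      # from the review
PROCESSING_HOURS = 38  # from the review
ASSUMED_FPS = 24       # assumption: typical film frame rate


def throughput(film_minutes, processing_hours, fps):
    """Return (frames processed per second, slowdown versus real time)."""
    film_seconds = film_minutes * 60
    processing_seconds = processing_hours * 3600
    total_frames = film_seconds * fps
    return total_frames / processing_seconds, processing_seconds / film_seconds


frames_per_second, slowdown = throughput(FILM_MINUTES, PROCESSING_HOURS, ASSUMED_FPS)
print(f"{frames_per_second:.2f} frames per second")
print(f"{slowdown:.1f} times slower than real-time playback")
```

That is a little under one frame per second, or roughly 25 times slower than simply playing the film.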

With version two of the software, this same video conversion ranged from 43 to 72 hours, depending on the model used. They managed to make the software run slower. Even the quick preview takes dramatically longer to load, and that is ignoring the couple of times the software crashed during processing.

Version three of the software introduced multi-GPU handling, but I found on my system that it is slower than using just one GPU. I suspect that this is because my built-in video processor is much less capable than my add-on video card. I’m not sure how exactly Video AI distributes the work, but I suspect the issue is that the software has to wait for my lesser GPU to finish before it assigns more work to both GPUs. Bear in mind, this is just speculation from a technical user perspective.
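
My speculation can be expressed as a toy model. The frame rates below are hypothetical, not measurements from my system, and I have no knowledge of how Video AI actually schedules its work. The sketch only shows that if work is handed out in lockstep, a slow second GPU can make things worse than using the fast one alone.

```python
def lockstep_rate(rates):
    """Every GPU gets one frame per round and the round ends when the
    slowest GPU finishes, so all GPUs run at the slowest one's pace."""
    return len(rates) * min(rates)


def independent_rate(rates):
    """Each GPU pulls its next frame as soon as it is free."""
    return sum(rates)


# Hypothetical frames per second for a discrete GPU and an integrated GPU.
FAST_GPU = 1.0
SLOW_GPU = 0.2

print("fast GPU alone:      ", FAST_GPU)
print("both, lockstep:      ", lockstep_rate([FAST_GPU, SLOW_GPU]))
print("both, independently: ", independent_rate([FAST_GPU, SLOW_GPU]))
```

With these made-up numbers, lockstep sharing is slower than the fast GPU on its own, while independent queues would give a modest gain. Which of the two (if either) matches the real software is an open question.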

Output Size

The size of the final file is quite large, averaging 5-6 GB for a 90 minute film in 1080p. This is about 1-2 GB larger than usual, so I run the file through an optimizer which maintains quality of audio and video, while reducing the file size.
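
File size is essentially bitrate multiplied by running time, so these sizes can be turned into average bitrates. A quick sketch, treating 1 GB as 10^9 bytes and counting audio and video together:

```python
def average_bitrate_mbps(size_gb, minutes):
    """Average overall bitrate in megabits per second.
    Assumes 1 GB = 10**9 bytes and includes audio as well as video."""
    bits = size_gb * 1e9 * 8
    seconds = minutes * 60
    return bits / seconds / 1e6


for size_gb in (4, 5, 6):
    rate = average_bitrate_mbps(size_gb, 90)
    print(f"{size_gb} GB over 90 minutes is about {rate:.1f} Mbit/s")
```

So the Topaz output averages somewhere around 7 to 9 Mbit/s, compared with roughly 6 Mbit/s for a file of the size I would normally expect.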

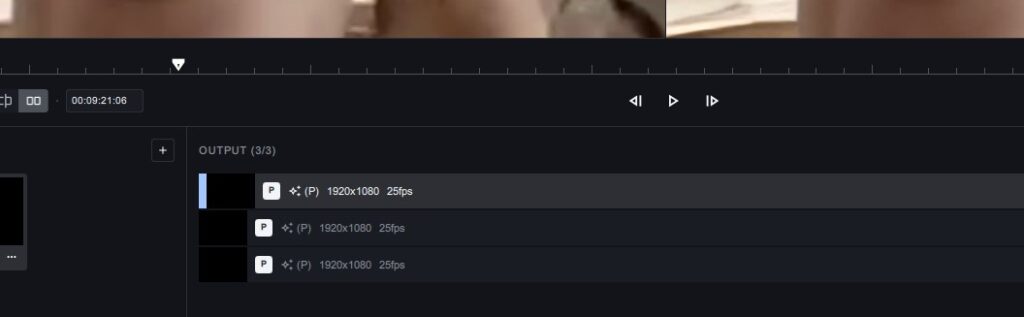

Preview Review

One of the best improvements to version 3 of the software is the Preview history. The software retains your previews so that you can make adjustments and reprocess the preview segment.

Clicking between the previews makes it much easier to see the differences. As each preview is clicked, the settings detail changes (grayed out) to show how that preview was configured. This makes it much easier to figure out the next adjustment to make.

It would be nice if Topaz would link the previews … that is, if I pause on one frame of a preview, when I click on another preview of the same segment, show that same frame and positioning. Right now we have to manually adjust the view position and select the frame for comparison.

Topaz Video Enhance AI Review Summary

I use Topaz Labs tools in my photography work. Many of them, like DeNoise AI and Sharpen AI, are excellent tools. Topaz Video AI has grown a lot since its initial release, but it still has a way to go.

At a retail price of $299, Topaz Video Enhance AI is expensive for its limitations. If Topaz Labs were to drastically improve facial and text handling, as well as processing times and perhaps reduce finished file size, then the product would be worth that cost.

Version 2.6 Update (2022 January 23)

At the beginning of 2022, Topaz had a sale which made the price worthy of my purchase. Version 2.6.2 is the current version, and I am running an upscale operation even as I type this update. Some of their newer features give greater control over the scaling operations and cleanup, but they have done nothing to improve processing speed, and the artifacts discussed in this article still exist.

Version 3.0 Update (2023 February 14)

Throughout this article, there are several modifications to reflect improvements to the product as of my last available version 3.0.12. I refuse to pay the high price to upgrade my maintenance license and get the current minor release version. Perhaps when they release the next major version and offer a good discount, I’ll re-evaluate fully.

Disclaimer

In the United States, and many other countries worldwide, it is technically not legal to rip copyrighted materials from their retail source (i.e., DVD and Blu-ray), even for making backup copies of content that you lawfully purchased. In the United States, this is due to the Digital Millennium Copyright Act (DMCA).

United States Title 17 specifically indicates that it is illegal to reproduce a copyrighted work. But there are a lot of gray areas around that statement. Consider this – you are reading this blog post on your computer. (Don’t forget your smart phone and tablets are computers too.) Your computer creates a locally cached copy of this article on itself to make review easier, especially if you go offline. According to Title 17, your computer just broke the law on your behalf – oopsy. We can also rip our music CDs for the purpose of our personal use, playing on our mobile devices. Is it legal? Well according to Title 17 it is copyright infringement.

Giving or selling a copy of your ripped content to someone else is where the gray area becomes black and white. Redistribution of copyrighted content is absolutely illegal.

The copyrighted works which I used for this evaluation were ripped purely for this exercise in evaluating the Topaz Labs tool, to exhibit the difference between upscaling professional video and home video, which tends to be of lower quality. The uses herein are protected under “fair use” rights.

Anyone wishing to copy and upscale copyrighted works must obtain permission and licensing from the copyright owner, if it is not themselves.

Thank you for this article. What alternative would you recommend for video enhancement?

To date I have not found a video upscaling product whose results I am satisfied with. If Topaz’s tool were better priced, I’d tolerate its mediocre quality. I am in the process of evaluating a product by ACD Systems. I expect to post a review in a few weeks.

Thank you for your prompt response and I look forward to reading your next review. I’ll hold off on purchasing Topaz.

Thank you for this. I am doing the trial currently, and this is my non-expert opinion so far.

1) Generally, I would say the output is... ok compared to source, but I don’t quite seem to get the quality I see implied in some reviews (lack of know-how, probably).

2) File output sizes for 1920x are MASSIVE. I mean, excessive. For example, a 72.5 MB 320×240 source converted to 1920×1080 results in a nearly 1.5 GB file!

3) The explanations for the various models are there, but, as something of a novice, I find the tooltips are not very helpful when it comes to deciding which model you should use for a given file.

4) Related to 3, you end up having to run multiple runs on the same file, using various settings to hopefully find the optimal outcome. Even for small source files this can eat up a lot of time.

5) You only get an estimate of project time AFTER you start the upscale. There is no way for it to give any kind of estimate BEFORE you start.

For $199, or 299 even, I was hoping for either better output or less ridiculous output sizes. I can only imagine what sort of file Topaz will create from a 10 min 70 MB file if I choose the 4K settings.

The resolution and file size of your source video isn’t going to have much impact on the file size of your scaled version. It is normal for a 1080p file to be about 2-3 GB per hour. This will vary depending on the audio encoding (Stereo versus Surround, bit rate, etc.). I agree that Topaz does make the files much larger than necessary. I’ll usually send the files through ACDSee Video Converter, or some other encoder/converter, to optimize the file.

I found the Preview mode in Video Enhance AI gives a fair representation of how the final output would look. This allowed me to mess with the settings without committing an extreme amount of time for a full conversion.

I get great results, but I use it in the manner that makes the most sense for highest quality output. I use at least 2 TB of disk space for working files (only 16-bit TIFF, and I render in Adobe After Effects, with final conversion in HandBrake), and then my final output for a feature film (90 mins) will be in the 4-5 GB range @ 7000 bitrate 1080p. That is small for HQ HD, but it looks pretty good, especially compared to what I had to work with. Some of the obscure films I’ve done could be passed off as Blu-ray. Really, most of my issues arise from de-interlacing and IVTC.

I guess these are just example clips of what Topaz Enhance does, but why bother with these films when you can purchase them or even download them with perfect quality already?

Topaz demonstrates their capabilities using self-created content (assumption), which allows them to ensure the results they want to demonstrate. Excluding the high school play, I used popular films as a common reference. This production film content provided perfect examples of how the product handles professionally recorded and edited content, since it was falling short on amateur and home-grown content. Unfortunately, it fell short on the professional content as well.